I was inspired by Simon Willison’s recent post on embeddings and decided to use them to explore some documents. I’d blocked out some time to do this, but ended up with decent results in just under an hour.

Introduction

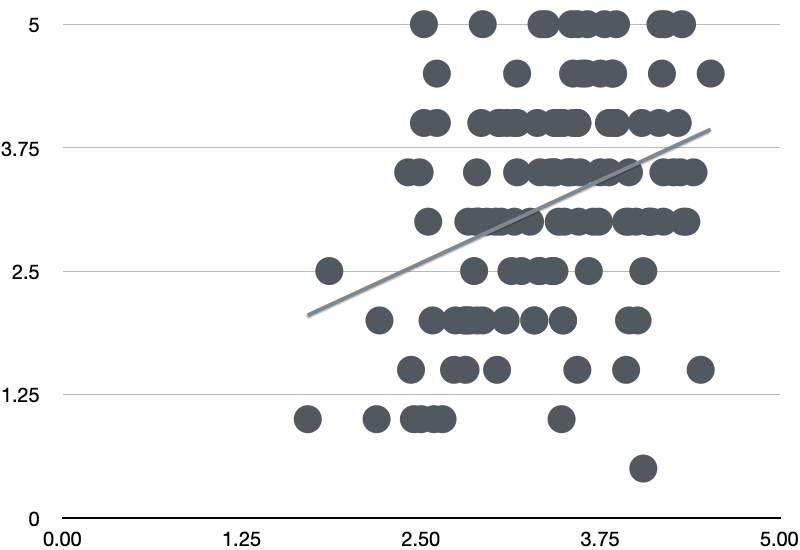

Embedding models turn pieces of text into fixed-length vectors of floating-point numbers. These vectors, the embeddings, can be thought of as locations within a multi-dimensional space, where the position relates to a text’s semantic content “according to the embedding model’s weird, mostly incomprehensible understanding of the world”. While nobody understands the meaning of the individual numbers, the relative locations of points representing different documents can be used to learn about these documents: texts with similar meanings tend to end up close together.
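To make the idea of "locations" concrete, here is a minimal sketch of cosine similarity, one common way to compare two embedding vectors. The three-number vectors and their labels are made up for illustration; real embeddings have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical toy "embeddings", invented for this example.
cats = [0.9, 0.1, 0.0]
kittens = [0.8, 0.2, 0.1]
tax_returns = [0.0, 0.1, 0.9]

print(cosine_similarity(cats, kittens))      # close to 1: pointing the same way
print(cosine_similarity(cats, tax_returns))  # close to 0: pointing elsewhere
```

A score near 1 means the two vectors point in nearly the same direction, which is what "similar" means in this space.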

The process

I decided to go with the grain of Willison’s tutorials by setting up Datasette, an open-source tool for exploring and publishing data. Since this is based on SQLite, I was hoping this would be less hassle than using a full RDBMS. I did a quick install and got Datasette running against my Firefox history file.

OpenAI have a range of embedding models. What I needed to do was send my input text to OpenAI’s API and get the embeddings back. I’m something of a hack with Python, so I searched for an example, finding a detailed one from Willison, which pointed me towards an OpenAI-to-SQLite tool he’d written.
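For a sense of what that request looks like, here is a rough sketch rather than the code Willison’s tool actually runs. It assumes a client shaped like the one in the official `openai` Python package (version 1 or later), and the model name is an assumption. A fake client stands in for the real one so the example runs without a network call or an API key.

```python
from types import SimpleNamespace


def embed_texts(texts, client, model="text-embedding-3-small"):
    """Return one embedding (a list of floats) per input string.

    `client` is expected to behave like `openai.OpenAI()`. The default
    model name is an assumption, not necessarily the one used in this post.
    """
    response = client.embeddings.create(model=model, input=texts)
    return [item.embedding for item in response.data]


class FakeEmbeddings:
    """Hypothetical offline stand-in that mimics the response shape."""

    def create(self, model, input):
        # A deterministic 3-number "embedding" per text, for demonstration only.
        data = [SimpleNamespace(embedding=[float(len(t)), 0.0, 1.0]) for t in input]
        return SimpleNamespace(data=data)


fake_client = SimpleNamespace(embeddings=FakeEmbeddings())
vectors = embed_texts(["first post", "second post"], fake_client)
print(len(vectors), len(vectors[0]))
```

With the real package installed, passing `OpenAI()` from `from openai import OpenAI` in place of `fake_client` should send the request for real, picking up the key from the `OPENAI_API_KEY` environment variable.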

(Willison’s documentation of his work is exemplary, and makes it very easy to follow in his footsteps.)

There was a page describing how to add the embeddings to SQLite which seemed to have everything I needed – which meant the main problem became wrangling the real-world data into Datasette. This sounded like the sort of specific problem that ChatGPT is very good at solving. I wrote a few prompts to specify a script that created an SQLite DB whose posts table had two columns – title and body, with all of the HTML gubbins stripped out of the body text.
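The generated script is not reproduced here, but a minimal sketch of the same idea, using only the Python standard library, might look like this. The input format (a list of title and HTML pairs) and the function names are assumptions for illustration.

```python
import sqlite3
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect text content, skipping anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def strip_html(html):
    """Return the plain text of an HTML fragment with whitespace collapsed."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join("".join(parser.parts).split())


def build_posts_db(path, posts):
    """Create a posts(title, body) table and fill it with cleaned text."""
    conn = sqlite3.connect(path)
    conn.execute("CREATE TABLE IF NOT EXISTS posts (title TEXT, body TEXT)")
    conn.executemany(
        "INSERT INTO posts (title, body) VALUES (?, ?)",
        [(title, strip_html(body)) for title, body in posts],
    )
    conn.commit()
    return conn


# Hypothetical sample data; a real run would read an export of the posts.
sample_posts = [
    ("Hello", "<p>Hello <b>world</b>!</p>"),
    ("Styled", "<style>p{color:red}</style><p>Just &amp; text</p>"),
]
conn = build_posts_db(":memory:", sample_posts)
rows = conn.execute("SELECT title, body FROM posts ORDER BY rowid").fetchall()
print(rows)
```

Swapping `":memory:"` for a filename such as `posts.db` gives a file that Datasette can open directly.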

Once I’d set up my OPENAI_API_KEY environment variable, it was just a matter of following the tutorial. I then had a new table containing the embeddings – the big issue being I was accidentally using the post title as a key. But I could work with this for an experiment, and could quickly find similar documents. The power of this is in what Willison refers to as ‘vibes-based search’. I can now extend this to take a small piece of arbitrary text and find anything in my archive related to that text.
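As a sketch of how a search like this can work over stored embeddings, the example below ranks rows by cosine similarity to a query vector. The table layout (an integer id as the key rather than the title), the float32 blob encoding, and the toy vectors are all assumptions for illustration. In a real search the query vector would come from embedding the arbitrary text with the same model.

```python
import math
import sqlite3
import struct


def encode(vector):
    """Pack a list of floats into a little-endian float32 blob."""
    return struct.pack("<" + "f" * len(vector), *vector)


def decode(blob):
    """Unpack a float32 blob back into a list of floats."""
    return list(struct.unpack("<" + "f" * (len(blob) // 4), blob))


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def most_similar(conn, query_vector, limit=3):
    """Return (id, score) pairs for the rows closest to query_vector."""
    scored = [
        (cosine_similarity(query_vector, decode(blob)), post_id)
        for post_id, blob in conn.execute("SELECT id, embedding FROM embeddings")
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [(post_id, score) for score, post_id in scored[:limit]]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE embeddings (id INTEGER PRIMARY KEY, embedding BLOB)")
# Hypothetical toy vectors keyed by post id.
toy = {1: [0.9, 0.1, 0.0], 2: [0.8, 0.2, 0.1], 3: [0.0, 0.1, 0.9]}
conn.executemany(
    "INSERT INTO embeddings (id, embedding) VALUES (?, ?)",
    [(post_id, encode(vec)) for post_id, vec in toy.items()],
)

results = most_similar(conn, [1.0, 0.0, 0.0], limit=2)
print(results)
```

Keying on a numeric id avoids the problem of two posts sharing a title. Comparing against every row is fine for a personal archive, though a large collection would want an index built for vector search.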

Conclusion

Playing with embeddings produced some interesting results. I understood the theory, but seeing it applied to a specific dataset I knew well was useful.

The most important thing here was how quickly I got the example set up. Part of this, as I’ve said, is due to Willison’s work in paving some of the paths to using these tools. But I also leaned heavily on ChatGPT to write the bespoke Python code I needed. I’m not a Python dev, but genAI allows me to produce useful code very quickly. (I chose Python as it has better libraries for data work than Java, as well as more examples for the LLM to draw upon.)

Referring yet again to Willison’s work, he wrote a blog post entitled ‘AI-enhanced development makes me more ambitious with my projects’. The above is an example of just this. I’m feeling more confident and ambitious about future genAI experiments.