A new project at work has got me thinking about whether Java works as a language for AWS Lambda applications. The more I’ve looked into this, the more my research has expanded, and I’ve got a little lost in the topic. This post is a set of notes intended to add some structure to my thoughts. In time, this may become a talk or a longer piece of writing.

- The biggest issue with Java on Lambda is cold starts: the initial delay in executing a function that has been idle or newly deployed. This delay occurs while the runtime environment is being set up. Because the Java platform requires a JVM to be started, this adds a significant delay compared with other platforms.

- Amazon evidently understand that cold starts are an issue, since they offer a number of workarounds, such as provisioned concurrency (paying extra to ensure that some Lambda instances are always kept warm). There is also a Java-specific option, SnapStart, which works by storing a snapshot of the memory and disk state of an initialised Lambda environment and restoring from that.

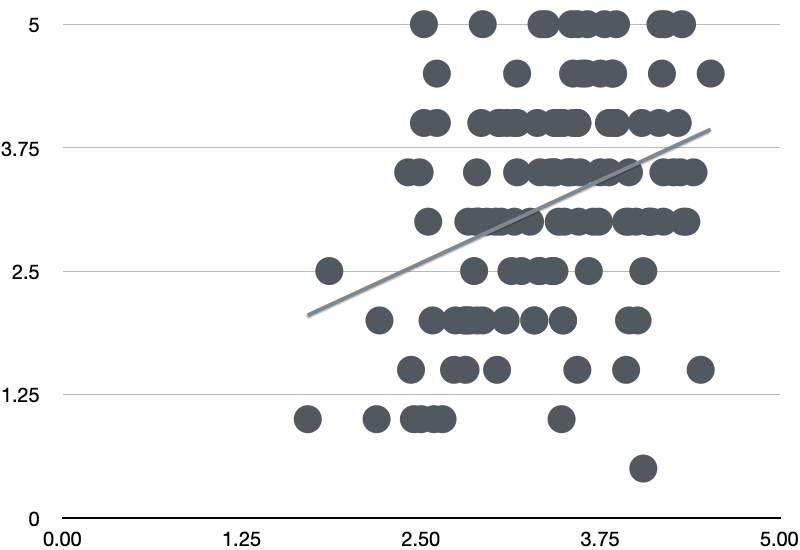

- Maxime David has set up a site to benchmark Lambda cold starts on different platforms. The fastest is C++ at ~12ms, with Graal at 124ms and Java at around 200ms. Weirdly, Java using SnapStart is the slowest of all at >=600ms (depending on Java version). This is counter-intuitive and there is an open issue raised about it.

- Yan Cui, who writes on AWS as theburningmonk, posted a ‘hot take’ on LinkedIn suggesting that people worry too much about cold starts: “for most people, cold starts account for less than 1% of invocations in production and do not impact p99 latencies”. He goes on to warn against synchronously calling Lambdas from other Lambdas(!), and discusses how traffic patterns affect initialisation.

- There’s an excellent article from Yan Cui that digs further into this question of traffic patterns: ‘I’m afraid you’re thinking about AWS Lambda cold starts all wrong’. It looks at Lambdas in relation to API Gateway in particular, but makes the point that concurrent requests to a Lambda can cause a new instance to be spun up, which then incurs the cold start penalty for one of the requests.

- The same article goes on to suggest ‘pre-warming’ Lambdas before expected spikes as one option to limit the impact, possibly even short-circuiting the usual work of that Lambda for these wake-up requests. It also suggests using cron to make requests to rarely-used endpoints to keep them warm. The article is from 2018, so it does not take account of some of the newer solutions – although I’ve seen this idea of pinging Lambdas used recently as a quick-and-dirty solution.

- It’s easy to get Graal working with Spring Boot, producing an executable that can be run by AWS Lambda. This gets the cold start of Spring Boot down to about 500ms, which is quite impressive – although still larger than many other platforms. Nihat Önder has made a GitHub repo available.

- However, the first execution of the Graal/Spring Boot demo after the cold start adds another 140ms, which tips this well over the threshold of what is acceptable. I’ve read that there are issues with lazy loading in the AWS libraries which I need to dig into.

- Given the ease of using languages like TypeScript, it’s hard to make a case for using Java in AWS Lambda when synchronous performance is important – particularly if you’re building simple serverless functions rather than using huge frameworks like Spring Boot.
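
As a note to self, the ‘short-circuiting’ idea from the pre-warming point above might look something like the sketch below. This is only an illustration under assumptions: the `warmup` key is a convention I have made up for the scheduled ping, `WarmupAwareHandler` and `doRealWork` are hypothetical names, and a real function would implement `RequestHandler` from `aws-lambda-java-core` rather than being a plain class.

```java
import java.util.Map;

// Hypothetical handler sketch. In a real function this class would implement
// RequestHandler<Map<String, Object>, String> from aws-lambda-java-core.
class WarmupAwareHandler {
    // Assumed convention: the scheduled ping sends an event like {"warmup": true}.
    static final String WARMUP_KEY = "warmup";

    String handleRequest(Map<String, Object> event) {
        if (Boolean.TRUE.equals(event.get(WARMUP_KEY))) {
            // Wake-up ping: the environment is now warm, so skip the real work.
            return "warmed";
        }
        return doRealWork(event);
    }

    // Placeholder for the function's actual business logic.
    String doRealWork(Map<String, Object> event) {
        return "processed " + event.getOrDefault("id", "unknown");
    }
}
```

The point of returning early is that the ping still forces the environment to initialise, but does not touch downstream services or count as real traffic.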

Next steps

Before going too much further into this, I should try to produce some simple benchmarks, looking at a trivial example of a Java function and comparing Graal, the regular Java runtime and SnapStart. This will provide an idea of the lower limits for these start times. It would also be useful to look at the times of a Lambda that accesses other AWS services, such as one that queries S3 and DynamoDB, to see how this more complicated task affects the cold start time.

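Given a benchmark for a more realistic Lambda, it’s then worth thinking about how to optimise a particular function. Using more memory should help, for example, since Lambda allocates CPU in proportion to the configured memory. So should moving complicated set-up out of the handler method and into the initialisation phase (a static initialiser or the handler’s constructor), so that it runs once per environment rather than on every request. How much can a particular Lambda be sped up?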
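
To make the set-up point concrete, here is a minimal sketch of doing the expensive work when the handler object is created rather than inside each request. It is an illustration, not a tested Lambda: `ExpensiveClient` is a hypothetical stand-in for something like an AWS SDK client, `InitPhaseHandler` is a made-up name, and a real function would implement `RequestHandler` from `aws-lambda-java-core`. The `firstInvocation` flag is one simple way a benchmark could label which requests landed on a fresh environment.

```java
import java.util.Map;

// Hypothetical stand-in for something slow to construct, such as an AWS SDK client.
class ExpensiveClient {
    static int constructions = 0;

    ExpensiveClient() {
        constructions++; // a real client would load config, open connections, etc.
    }

    String lookup(String key) {
        return "value-for-" + key;
    }
}

// In a real function this would implement RequestHandler from aws-lambda-java-core.
class InitPhaseHandler {
    // Built once, when the handler object is created, not on every request.
    private final ExpensiveClient client = new ExpensiveClient();

    // True only until the first request in this environment has been handled.
    private boolean firstInvocation = true;

    String handleRequest(Map<String, Object> event) {
        String label = firstInvocation ? "cold" : "warm";
        firstInvocation = false;
        return label + ":" + client.lookup(String.valueOf(event.get("key")));
    }
}
```

Calling `handleRequest` twice on the same instance constructs the client only once, which is the behaviour I would hope to see reflected in the warm-request timings.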

It’s also worth considering what would be an acceptable response time for a Lambda endpoint – noting that this depends very much on traffic patterns. If only 1-in-100 requests have a cold start, is that acceptable? What about for a rarely-used endpoint, which always has a cold start?
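
One way to reason about the 1-in-100 question is to look at what it does to percentiles. The sketch below uses a nearest-rank percentile over simulated latencies. The numbers are assumptions for illustration only: 20ms for a warm request is invented, and 640ms for a cold one is just the 500ms plus 140ms from the Graal/Spring Boot notes above. `ColdStartPercentiles` and its methods are hypothetical helpers.

```java
import java.util.Arrays;

class ColdStartPercentiles {
    // Nearest-rank percentile, using integer arithmetic to avoid rounding surprises.
    static long percentile(long[] latenciesMs, int pct) {
        long[] sorted = latenciesMs.clone();
        Arrays.sort(sorted);
        int rank = (pct * sorted.length + 99) / 100; // ceil(pct / 100 * n)
        return sorted[Math.max(rank, 1) - 1];
    }

    // Builds `total` samples in which `cold` requests pay the cold-start latency.
    static long[] simulate(int total, int cold, long warmMs, long coldMs) {
        long[] samples = new long[total];
        Arrays.fill(samples, warmMs);
        Arrays.fill(samples, 0, cold, coldMs);
        return samples;
    }
}
```

With these assumed figures, `percentile(simulate(100, 1, 20, 640), 99)` is still the warm latency, whereas two cold starts in a hundred push p99 up to the cold figure. For a rarely-used endpoint where every request is cold, every percentile is the cold latency, so the question there is simply whether that number is tolerable.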